📦 Business Model Moat: The Long-Tail Victory of OTS (Off-The-Shelf)

Chenbro survives and thrives among strong competitors (such as Foxconn and Quanta, both of which run their own chassis factories) largely because of its business model: the OTS (Off-The-Shelf) strategy.

Most system manufacturers (ODMs) work on a JDM (Joint Design Manufacturing) basis, custom-building an exclusive chassis for a single major client (such as Meta or Google). This is the "bespoke suit" model: large volumes, but a single client and high tooling costs.

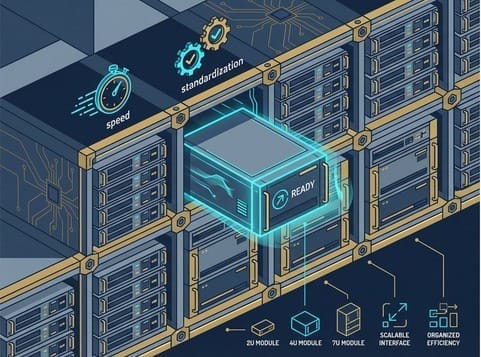

Chenbro is different. Alongside its JDM work, it maintains a robust OTS product line. This is the "ready-to-wear" model: it pre-designs chassis modules to standard specifications and sells them off the shelf.

In the AI era, this strategy has paid off in three ways:

- Speed (Time-to-Market): Small and medium-sized CSPs, second-tier enterprise clients, and System Integrators (SIs) often cannot fund their own tooling or are in a hurry to ship AI servers, so waiting 3-6 months for tooling development is not an option. Chenbro's OTS chassis let them "buy and install," so they can seize AI business opportunities quickly.

- Long-Tail Effect: While the volume from a single client may not be as large as Meta's, thousands of enterprises worldwide require AI servers. Through the OTS model, Chenbro captures this large long-tail market, which helps explain why its gross profit margin has consistently stayed above its peers'.

- Extension to Customized Racks: According to the latest industry analysis, Chenbro is extending this standardization advantage to the rack level. This means it can offer not just individual chassis, but complete cabinet-level structural solutions, further expanding its potential market.

🧩 NVIDIA MGX's Divine Assist: A Match Made in Heaven for Modularity

If OTS is Chenbro's internal strength, then NVIDIA MGX, NVIDIA's modular server reference architecture, is its external booster.

In the past, server specifications were chaotic, with each vendor having its own design. But in the AI era, NVIDIA introduced the MGX architecture, attempting to "modularize" server design. MGX breaks down servers into standard building blocks (such as GPU modules, CPU modules, and power modules), allowing manufacturers to freely assemble them according to their needs.

This is a perfect match for Chenbro:

- Victory of Standardization: The core logic of MGX is "standardized interfaces," which is precisely Chenbro's area of expertise. Chenbro quickly followed suit, developing MGX-compatible chassis in several form factors (such as 2U, 4U, and 7U).

- Increased Penetration: Through MGX certification, Chenbro can more smoothly integrate into NVIDIA's ecosystem. This not only consolidates its position in general servers but also significantly increases its market share in the HGX (high-end GPU server) market.

- Shipment Momentum: Industry data indicate that, bolstered by rising shipments for HGX and MGX projects, Chenbro's server product shipments are strong. This year's HGX server shipments are expected to reach 90,000 to 100,000 units, an annual increase of 40%.
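The growth figure in the last bullet implies a prior-year base that can be backed out with simple arithmetic. The sketch below is a minimal illustration, assuming the 40% increase applies to both ends of the 90,000 to 100,000 unit range; the function name `implied_prior_year` is hypothetical and exists only for this example.

```python
def implied_prior_year(units_this_year: float, yoy_growth: float) -> float:
    """Back out last year's volume from this year's forecast and its YoY growth rate."""
    return units_this_year / (1.0 + yoy_growth)


# Figures quoted in the text: 90,000 to 100,000 units, up 40% year-on-year.
forecast_low, forecast_high = 90_000, 100_000
growth = 0.40  # assumption: the same growth rate applies to both ends of the range

prior_low = implied_prior_year(forecast_low, growth)
prior_high = implied_prior_year(forecast_high, growth)

print(f"Implied prior-year shipments: {prior_low:,.0f} to {prior_high:,.0f} units")
```

Under that assumption, the prior-year base works out to roughly 64,000 to 71,000 units.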

🌬️ Physical Challenges: The Steel Engineering Solution for "Weight" and "Heat"

In AI servers, chassis face two unprecedented physical challenges, which is where Chenbro's technical expertise truly shines:

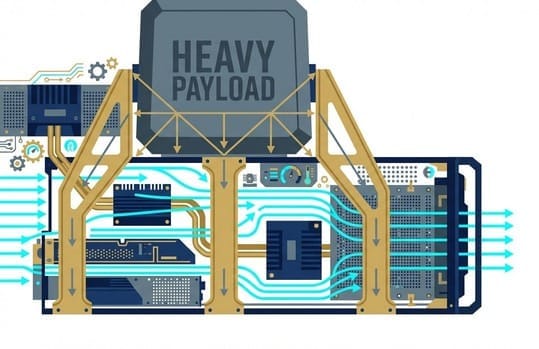

1. Counteracting Gravity: Anti-Sagging

A traditional server weighs approximately 15 kg, but an AI server fully loaded with H100 or B200 GPUs can weigh 50 to 80 kg, or even more.

If the chassis lacks sufficient structural strength, it will sag in the middle under its own weight once installed in a rack. The consequences can be severe: a bent motherboard, broken circuits, and poor connector contact.

Chenbro uses high-strength steel and dedicated structural designs (such as reinforcing ribs and stress-distributing structures) to ensure the chassis maintains micron-level flatness even under heavy loads. This is crucial for protecting the motherboard assembly installed at the L6 integration stage.
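To get a feel for the scale of the problem, here is a rough sketch. For a linearly elastic structure with unchanged geometry and material, deflection scales in proportion to the load it carries (for example, the textbook mid-span deflection of a uniformly loaded, simply supported beam is 5wL^4 / (384EI), which is linear in the load w). The snippet applies that simplifying assumption to the weights quoted above. It is an idealization for illustration, not Chenbro's actual design method, and the names are hypothetical.

```python
# Weights quoted in the text (kg).
TRADITIONAL_SERVER_KG = 15.0
AI_SERVER_KG_RANGE = (50.0, 80.0)


def deflection_ratio(new_mass_kg: float, old_mass_kg: float) -> float:
    """Ratio of sag under the new load to sag under the old load.

    Assumes linear elasticity and identical geometry and material,
    so deflection is directly proportional to load.
    """
    return new_mass_kg / old_mass_kg


ratios = [deflection_ratio(m, TRADITIONAL_SERVER_KG) for m in AI_SERVER_KG_RANGE]

for mass, ratio in zip(AI_SERVER_KG_RANGE, ratios):
    print(f"{mass:.0f} kg load: about {ratio:.1f}x the sag of a 15 kg server")
```

Read the other way, the same ratio is the factor by which bending stiffness (the EI term) would need to rise to hold sag constant, which is the job that ribs and higher-strength steel are meant to do.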

2. Guiding Thermal Flow: Precise Airflow Calculation

Although liquid cooling is a trend, air cooling still plays a significant role during the current transitional period.

In a high-density 4U or 7U chassis, how can the cold air drawn in by the fans flow precisely over each GPU's heat sink without creating turbulence or dead spots?

Chenbro's chassis are not just metal plates; they are "airflow channels" designed with CFD (Computational Fluid Dynamics) simulation. What should the perforation rate be? How should the air duct be shaped? These questions demand deep thermal engineering experience. Even in the liquid cooling era, the internal space layout and pipe routing within the chassis still test structural design capabilities.

📊 The Starting Point of 2026: From Recovery to Outbreak

January 2026 revenue came in at NT$2.65 billion, down only 2% month-on-month and up 137% year-on-year. This sends three clear industry signals:

- Sustained AI Demand: The demand for GPU/ASIC AI servers did not cool down due to holidays; instead, it continued to heat up.

- General Server Recovery: In addition to AI, orders for general-purpose servers are also recovering. This is Chenbro's foundational business, and its recovery provides stable cash flow.

- Better than Expected: January's revenue alone already amounts to 43% to 46% of the market's first-quarter estimate, well above the roughly one-third that a single month would contribute at an even pace. First-quarter revenue is therefore highly likely to exceed market expectations, laying a strong foundation for the whole of 2026.
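The revenue figures in this section can be cross-checked with a few lines of arithmetic. The sketch below backs out the comparison months and the implied first-quarter estimate from the percentages quoted above; the variable names are illustrative, and the results are derived values, not reported figures.

```python
# Figures quoted in the text (revenue in NT$ billion).
jan_2026 = 2.65
mom_change = -0.02                  # month-on-month change
yoy_change = 1.37                   # year-on-year change (+137%)
share_low, share_high = 0.43, 0.46  # January as a share of the Q1 estimate

# Back out the comparison periods implied by the growth rates.
dec_2025 = jan_2026 / (1 + mom_change)
jan_2025 = jan_2026 / (1 + yoy_change)

# A higher share implies a lower Q1 estimate, and vice versa.
q1_estimate_low = jan_2026 / share_high
q1_estimate_high = jan_2026 / share_low

# Benchmark: one month at an even pace contributes one third of a quarter.
even_pace_share = 1 / 3

print(f"Implied Dec 2025 revenue: NT${dec_2025:.2f}bn")
print(f"Implied Jan 2025 revenue: NT${jan_2025:.2f}bn")
print(f"Implied Q1 estimate: NT${q1_estimate_low:.2f}bn to NT${q1_estimate_high:.2f}bn")
print(f"Even-pace monthly share: {even_pace_share:.0%}")
```

The comparison with the one-third benchmark is only a rough gauge, since monthly revenue is rarely flat across a quarter.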

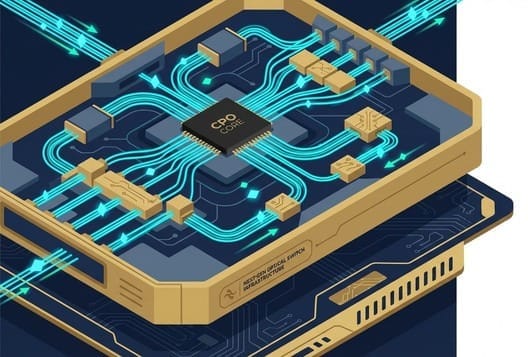

🔌 From Chassis to Switches: The Proving Ground for Co-Packaged Optics (CPO) Entry

In rack-scale architectures like NVL72, the other core component besides the compute trays is the Switch Tray. In the past, switch chassis were the domain of networking equipment manufacturers. In the AI era, however, switch power consumption and heat output have surged, and switch structures increasingly resemble those of servers, which gives Chenbro an opening into this market.

According to the latest in-depth industry analysis, Chenbro has achieved significant breakthroughs:

- Victory in 3U Switch Trays: Chenbro has become the primary chassis supplier for NVIDIA's 3U switch trays. This is not an ordinary switch; it is a critical hub carrying NVSwitch cores and responsible for GPU interconnection.

- Laying the Groundwork for CPO (Co-Packaged Optics): The report further reveals that Chenbro's product design already encompasses the future CPO architecture. When optical modules are directly packaged next to the chip, the chassis's thermal management and optical fiber routing will face new challenges, and Chenbro has taken the lead in positioning itself for this.

- AI Inference Storage Platform: In addition to computing and networking, Chenbro has also entered NVIDIA's AI Inference Storage Platform. This demonstrates that its product line has fully expanded from "computing" to "transmission" and "storage."

🛡️ GB300 Strategic Expansion: From "Single" to "Diversified"

In the GB200 generation, Chenbro's customer base was relatively concentrated. In the upcoming GB300 generation, industry intelligence points to clear "customer diversification": Chenbro appears to have primarily served one major client in the GB200 phase, and that number is expected to rise to three in the GB300 phase.

This means:

- Market Share Expansion: As more CSPs or OEMs adopt the GB300 architecture, Chenbro's penetration rate will further increase.

- ASIC Project Support: Besides NVIDIA's product line, Chenbro is also actively collaborating with CSPs' ASIC teams (such as AWS's Trainium/Inferentia) to develop specialized chassis. New non-server products have already entered the POC (Proof of Concept) stage and are expected to materialize in the second half of 2026. This allows Chenbro to capture both the GPU and ASIC markets.

🏭 Malaysia New Plant: Key to Mass Production in 2026 Q3

Finally, we need to focus on Chenbro's "physical footprint." Amidst Sino-US geopolitical tensions, CSP clients strongly demand "China+1" or "Taiwan+1" production capacity redundancy. Chenbro's new plant in Malaysia is precisely designed to meet this demand.

- Mass Production Schedule: Expected to officially commence mass production in the third quarter of 2026 (3Q26).

- Strategic Significance: This factory is not just for chassis production; it is also designed to cooperate with clients for cabinet product assembly and shipment.

- Proximity Service: Malaysia is gradually becoming a data center hub in Southeast Asia. By establishing a factory there, Chenbro can serve European and American CSP clients with data centers in the region, significantly shortening delivery times and reducing logistics costs. This will be a crucial driver for Chenbro's revenue surge in the second half of 2026.
