Positioning: The Sixth Battle Zone is an all-out war after "runaway power consumption." As single GPUs approach 1000W–2000W and cluster sizes reach tens of thousands of units, data centers are forced to evolve from computing factories into energy and thermal engineering systems.

Opening: Scaling Up Ten Thousandfold

In the recently concluded Fifth Battle Zone (Packaging and Testing), we followed the most advanced AI chips through a hellish ordeal at the microscopic scale.

There, we saw a chip subjected to a several-day burn-in in a 125°C oven; we saw Chroma's machines, during SLT (System Level Test) drills, having to use a 50-millisecond ice water "magic" to curb the chip's instantaneous heat surge.

In the labs and test facilities, we successfully preserved the life of this chip.

But now, please expand your mental spatial scale ten thousandfold in an instant.

When this superchip, drawing 1000W in the Blackwell generation and potentially more than 2000W in the future Rubin generation, no longer sits alone on a test socket, and tens or even hundreds of thousands of them are densely packed into the same server room as massive clusters of GB200 NVL72 racks...

This data center is no longer a traditional server room. It is fundamentally a gigantic "nuclear reactor"!

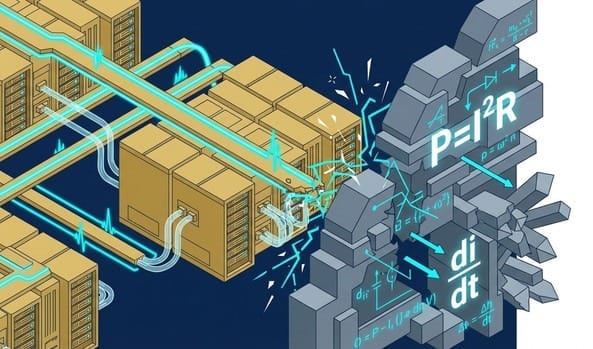

Here, the old promise attached to Moore's Law, that chips keep getting smaller and more power-efficient at the same time (strictly speaking, this is Dennard scaling), has completely failed. The entire tech industry is hurtling at 300 kilometers per hour into a "physical wall" that even fundamental science struggles to get past.

This wall is built from two of the coldest fundamental laws of physics: Joule's Law (resistive heating) and Faraday's Law of Electromagnetic Induction (the voltage induced when current changes rapidly).

🔥 Core Insight (The Power Crisis): Joule's Law Cornered

Let's do some harsh accounting.

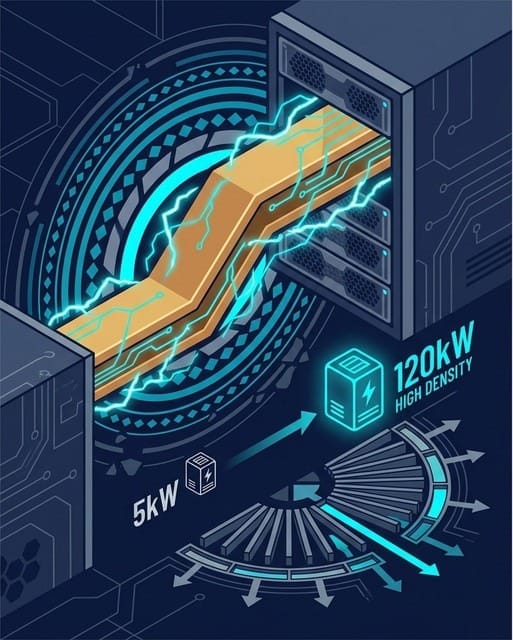

In the past cloud era, a rack filled with traditional CPU servers had a total power consumption of about 5kW to 10kW. This is comparable to having two or three air conditioners and an oven running at home simultaneously; traditional server room air conditioning and copper wire distribution were entirely adequate.

However, in the AI era, the power consumption of a single NVIDIA GB200 NVL72 rack surges past 120kW! This is 12 to 24 times the previous amount!

According to Joule's Law ($P = I^2 R$, heat power is proportional to the square of the current), which we learned in high school, the real problem is current. If we keep the traditional 48V DC rack architecture, delivering 120kW means the main busbar in this rack must carry 120,000 W ÷ 48 V = 2500 Amperes; at the older 12V level, the figure would be a terrifying 10,000 Amperes!

What does 2500 Amperes mean? A typical household's main circuit breaker is only 50 to 100 Amperes.

Under such massive current, even if the busbar is made thicker than an adult's arm, the waste heat generated by internal resistance ($I^2 R$ loss) as current passes through will cause the busbar to run a high fever, potentially melting adjacent insulation materials and motherboards.
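The arithmetic behind these figures can be sketched in a few lines of Python. This is only an illustration: the 120kW rack power and the 12V, 48V, and 800V levels come from this article, while `BUSBAR_RESISTANCE_OHM` is a hypothetical round number chosen to make the $I^2 R$ scaling visible, not a measured value for any real busbar.

```python
def bus_current(power_w: float, voltage_v: float) -> float:
    """Current (A) needed to deliver power_w at voltage_v, from I = P / V."""
    return power_w / voltage_v


def joule_loss(current_a: float, resistance_ohm: float) -> float:
    """Resistive heating (W) in a conductor, from P = I^2 * R."""
    return current_a ** 2 * resistance_ohm


RACK_POWER_W = 120_000           # GB200 NVL72 rack power cited in the article
BUSBAR_RESISTANCE_OHM = 100e-6   # hypothetical 0.1 milliohm busbar, for illustration only

for voltage in (12, 48, 800):
    current = bus_current(RACK_POWER_W, voltage)
    loss = joule_loss(current, BUSBAR_RESISTANCE_OHM)
    print(f"{voltage:>4} V bus: {current:>8.0f} A, busbar heating {loss:>9.2f} W")
```

Whatever resistance is assumed, the ratio between the rows stays the same: raising the bus voltage by a factor of k cuts the current by k and the resistive heating by k squared.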

Not to mention, fans simply cannot dissipate this amount of heat, and the high-speed operation of the fans themselves will consume an additional 20% of the rack's power.

Conclusion: We are facing an absolute physical crisis of "energy delivery and heat dissipation."

⚡ Physical Main Line: Lethal di/dt and Stringent Power Sequencing

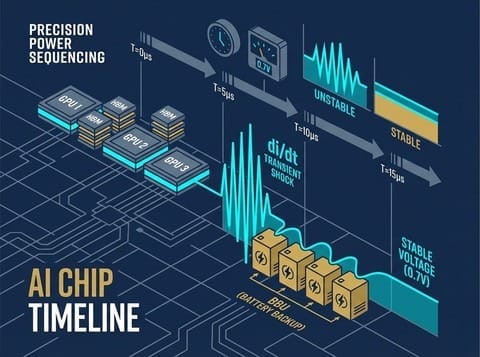

Beyond "excessive total power consumption," AI chips possess an even more lethal characteristic: the violent nature of instantaneous power draw.

In electronics, we use $di/dt$ (rate of current change) to describe this phenomenon.

When a GPU is in standby mode, it might only consume tens of watts; but when it suddenly receives a massive LLM (Large Language Model) inference task, its tens of billions of transistors all wake up within one microsecond (one-millionth of a second), instantaneously drawing a thousand watts or more!

This is akin to millions of households in a city simultaneously turning on their faucets at the exact same second.

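What happens to water pressure (voltage)? It plummets instantly! In circuit theory, this is known as a transient voltage droop: the parasitic inductance $L$ of the power delivery path resists the sudden change in current and drops a voltage of $V = L \cdot di/dt$ across itself.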

AI chips have extremely stringent requirements for voltage stability. If the standard power supply is 0.7V, and the voltage momentarily drops below 0.65V, this GPU, worth tens of thousands of US dollars, will directly "crash" due to logical signal errors.
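To get a feel for the scale, here is a minimal sketch of the droop estimate $V = L \cdot di/dt$. The 0.7V rail, the 0.65V crash threshold, and the one-microsecond wake-up come from this article; the 50W idle figure stands in for "tens of watts", and `LOOP_INDUCTANCE_H` is a purely hypothetical effective inductance. The model also ignores decoupling capacitors and the regulator's response, so it shows the order of magnitude only.

```python
def supply_current(power_w: float, rail_v: float) -> float:
    """Current (A) drawn at a given power and rail voltage, from I = P / V."""
    return power_w / rail_v


def inductive_droop(delta_i_a: float, delta_t_s: float, inductance_h: float) -> float:
    """Voltage (V) dropped across a parasitic inductance, from V = L * di/dt."""
    return inductance_h * delta_i_a / delta_t_s


RAIL_V = 0.7                 # nominal core voltage used in the article
MIN_V = 0.65                 # crash threshold used in the article
IDLE_W = 50                  # assumed stand-in for "tens of watts"
PEAK_W = 1000                # Blackwell-class power cited in the article
RAMP_S = 1e-6                # one-microsecond wake-up
LOOP_INDUCTANCE_H = 50e-12   # hypothetical 50 pH effective loop inductance

delta_i = supply_current(PEAK_W, RAIL_V) - supply_current(IDLE_W, RAIL_V)
droop = inductive_droop(delta_i, RAMP_S, LOOP_INDUCTANCE_H)
rail_min = RAIL_V - droop

print(f"current step: {delta_i:.0f} A")
print(f"droop: {droop * 1000:.1f} mV, rail dips to {rail_min:.3f} V")
print("below crash threshold" if rail_min < MIN_V else "within tolerance")
```

Under these assumptions, even tens of picohenries are enough to push the rail under the threshold, which is why the capacitors and regulators sitting right next to the chip matter so much.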

In an AI training cluster where hundreds of thousands of GPUs work in lockstep, if just one chip crashes, the entire training job must roll back to its last checkpoint and restart, wasting millions of dollars in time and electricity costs.

To solve this problem, system manufacturers must implement extremely stringent "Power Sequencing":

- The CPU, GPU, HBM memory, and NVLink switch chips on the server motherboard must never power on simultaneously.

- They must be awakened one by one, according to microsecond-level precise timing, to prevent instantaneous power drain.
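The effect of staggering can be sketched with a toy model. Everything numeric here is hypothetical: the `Rail` type, the inrush currents, the 100-microsecond pulse widths, and the wake-up order are illustrative assumptions, not values from any real board or vendor specification. The sketch only compares the peak combined current when all loads start together with the peak when they start one after another.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rail:
    name: str
    inrush_a: int    # hypothetical peak inrush current in amperes
    inrush_us: int   # hypothetical inrush duration in microseconds


def peak_current(rails, start_times_us):
    """Highest summed inrush current over time, sampled at 1 us steps."""
    end = max(start + rail.inrush_us for rail, start in zip(rails, start_times_us))
    peak = 0
    for t in range(end):
        total = sum(
            rail.inrush_a
            for rail, start in zip(rails, start_times_us)
            if start <= t < start + rail.inrush_us
        )
        peak = max(peak, total)
    return peak


# Illustrative loads only; the order is an assumption, not a documented sequence.
RAILS = [
    Rail("CPU", 200, 100),
    Rail("GPU", 600, 100),
    Rail("HBM", 150, 100),
    Rail("NVLink switch", 250, 100),
]

simultaneous = peak_current(RAILS, [0, 0, 0, 0])
staggered = peak_current(RAILS, [0, 100, 200, 300])
print(f"all at once: {simultaneous} A peak, staggered: {staggered} A peak")
```

With non-overlapping start times, the peak falls from the sum of all inrush currents to the largest single one, which is the whole point of waking the loads one by one.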

At the same time, to avoid wasting any power during voltage conversion, power modules have been forced to phase out traditional rectifier diodes entirely and adopt the far more complex "Synchronous Rectification (SR)" technology, pushing power conversion efficiency to 98% or more, stubbornly close to the physical limit.
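Why the diode has to go can be seen from a rough conduction-loss comparison. A diode dissipates roughly its forward voltage times the current, while a MOSFET used as a synchronous rectifier dissipates $I^2 R_{DS(on)}$. The operating point and component values below (`OUTPUT_V`, `OUTPUT_A`, `DIODE_VF`, `RDS_ON_OHM`) are hypothetical round numbers, and switching and gate-drive losses are ignored, so treat this as a sketch rather than a design calculation.

```python
def diode_loss(current_a: float, forward_v: float) -> float:
    """Conduction loss (W) of a rectifier diode, approximately Vf * I."""
    return current_a * forward_v


def mosfet_loss(current_a: float, rds_on_ohm: float) -> float:
    """Conduction loss (W) of a synchronous-rectifier MOSFET, I^2 * Rds(on)."""
    return current_a ** 2 * rds_on_ohm


def efficiency(output_w: float, loss_w: float) -> float:
    """Efficiency when loss_w is the only loss counted."""
    return output_w / (output_w + loss_w)


OUTPUT_V = 48.0      # hypothetical output voltage
OUTPUT_A = 50.0      # hypothetical output current
DIODE_VF = 0.7       # hypothetical diode forward voltage
RDS_ON_OHM = 0.002   # hypothetical 2 milliohm MOSFET on-resistance

output_w = OUTPUT_V * OUTPUT_A
p_diode = diode_loss(OUTPUT_A, DIODE_VF)
p_sr = mosfet_loss(OUTPUT_A, RDS_ON_OHM)

print(f"diode rectifier: {p_diode:.1f} W lost, {efficiency(output_w, p_diode):.2%} ceiling")
print(f"synchronous FET: {p_sr:.1f} W lost, {efficiency(output_w, p_sr):.2%} ceiling")
```

In this example the diode alone consumes most of a 2% loss budget before any other stage is counted, while the MOSFET leaves headroom for everything else.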

🗺️ Overview of the Sixth Battle Zone: An All-Encompassing Extreme Arms Race

As "computing power" becomes a core bargaining chip in great power competition, cloud giants cannot halt their progress due to "physical walls."

To break the curse of Joule's Law and solve the fear of di/dt crashes, the entire server hardware industry has been forced to launch an unprecedented "heavy-duty extreme arms race."

In this Sixth Battle Zone, we will present an in-depth analysis of four core battles for you:

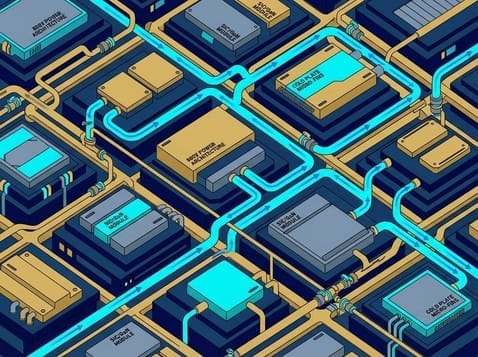

- Deconstructing the revolution from the macro grid to the rack, understanding how Google and Meta are aggressively pushing HVDC (High-Voltage Direct Current) directly into server rooms to eliminate "conversion waste heat."

- Comparing Taiwan's two power supply giants: Delta Electronics (2308)'s comprehensive infrastructure empire versus Lite-On Technology (2301)'s high-power-density assassin attack.

- Revealing NVIDIA's ultimate ambition, drawing inspiration from electric vehicles: the "800V Rack Revolution."

- Understanding how BBUs (Battery Backup Units, such as AES-KY, Sinbon Electronics) transform from blackout backup to "active life preservers" against di/dt.

- When voltages soar to 800V and currents surge to thousands of Amperes, traditional silicon-based MOSFETs become inadequate.

- Delving into the microscopic battlefield next to the chip, understanding how SiC (Silicon Carbide) and GaN (Gallium Nitride) are carving out their territories.

- Analyzing the "power phase revolution," and how TLVR (Trans-Inductor Voltage Regulator) and a large number of high-end MLCCs / Tantalum Capacitors staunchly prevent voltage collapse.

- When 1000W becomes the life-or-death limit for air cooling, the fans' own parasitic power consumption becomes unbearable.

- Starting with pump-out-resistant TIMs (thermal interface materials) and IHS heat spreaders (Kenmec).

- Striking directly at the throat of liquid cooling: micro-skived cold plates (Auras Technology, AVC Technology), and CDUs (Coolant Distribution Units) and QD (Quick Disconnect) couplings (CPC, LOTES) where not a single drop of water can leak.

- Pursuing the ultimate thermal black technology: MCLid (Micro-Channel Lid), and the final battle of Immersion Cooling, caught at the PFAS chemical environmental red line.

At the end of this sixth part, we will conduct a final roundup.

In this heavy-equipment transition wave involving hundreds of billions of US dollars, who holds the ultimate Specification Power?

- Is it NVIDIA, which dominates chips?

- Is it CSP cloud giants, who proposed the OCP open architecture?

- Or is it Vertiv and Delta Electronics, with their unrivaled integrated capabilities in "Power & Cooling Co-design"?

We will outline the pinnacle power pyramid of this industry chain for you.
